Engineers developing a responsive prosthetic

Consider your leg. It isn’t a stick on a hinge holding you up as you walk. It is a powered limb, connected to the whole body, actively moving you.

When a person loses a leg, or part of it, that power is lost, and it’s often not replaced.

Most people with lower-limb amputations use prosthetic legs that strap to the body. Although these relatively inexpensive devices get the job done, they are dead weight the person must carry while walking.

More expensive and complicated prosthetics provide power, assisting the body in movement. Tuning them to work in concert with the rest of a person’s body is a painstaking trial-and-error process requiring several visits with a clinician.

What if the powered prosthetic could adapt and respond to a person on its own?

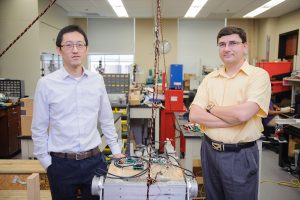

Engineering researchers at The University of Alabama are working to improve powered prosthetics by linking them with sensors. Their aim is a prosthetic that works seamlessly with the rest of the body, becoming a partner in movement without the need for manual tuning.

“Current devices have sensors embedded in the prosthesis, but it’s not about the whole body movement,” said Dr. Xiangrong Shen, a UA mechanical engineering researcher. “The goal is to integrate the leg with the human better. We try to treat them as a coupled system.”

One in 190 Americans is living with the loss of a limb – a number that could double by 2050, according to a 2008 study from St. Olaf College. More than 80 percent of amputations come from complications of the vascular system, while another 16 percent stem from trauma.

Shen is leading a project to improve powered prosthetics, one of several leg-prosthetic initiatives his lab has pursued over the years.

“The idea that is most interesting to me is combining engineered products with humans,” he said. “You have the close coupling of the engineered product with the human so you can make the human work better without thinking.”

For this project, Shen’s team is automating the tuning process. Rather than relying on back-and-forth calibration with a clinician, the team is creating a robotic prosthetic that is trained by the rest of the body.

“It’s providing higher level feedback to the controller,” he said. “It identifies what people want to do. We’re not directly tapping into the nervous system of humans, instead it’s just inferring people’s intention by taking cues.”

Instead of relying on sensors placed only where the prosthetic meets the body, researchers are developing a prosthetic with embedded sensors that detect motion, direction (such as up or down) and the type of ground underfoot, such as sand or a hardwood floor. The prosthetic would use this information to adjust to the person’s needs.

“If you have a powered prosthetic, then it can be way more convenient for the user, but it has to be smart by definition,” said Dr. Edward Sazonov, a UA electrical engineering researcher. “It has to be smart to recognize what exactly you want the device to do.”

Sazonov is working with Shen to develop a chest sensor to tune the prosthetic. Worn during the early days of use, the sensor would collect data on the movement of the torso to train the prosthetic on how the person moves before taking certain steps.

“Your upper body movement tells a lot more about the status of your walking than purely sensors on the prosthesis,” Shen said. “It gives you a global view, instead of a local view on the leg.”

That data will help the prosthetic mimic the decisions we make nearly unconsciously as we walk. For example, when we want to stand after sitting, we put weight on our feet and adjust our posture so we can stand more easily. The way we move our torso influences the rest of the body, and if our walk is off, we sway more. The prosthetic developed at UA would read that sway and adjust itself on the fly so the person can return to a normal gait for the conditions present.

“The main challenge is to ensure reliable recognition of the wearer’s activities and intent and translate these into actionable commands to the powered prosthetic,” Sazonov said.

After the prosthetic is developed at UA, biomedical researchers at Georgia Tech will test it on humans.

Dr. Shen is an associate professor of mechanical engineering. Dr. Sazonov is a professor of electrical and computer engineering. The National Science Foundation supports this project.